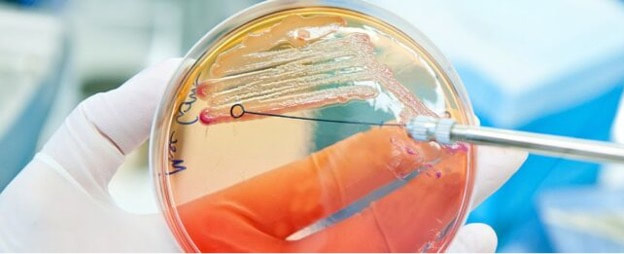

Besides eliminating disease-causing invaders in the human body, a single course of antibiotics can have an “immense” effect on the gut and the microorganisms that live there.

Certain bacteria or fungi may occasionally overgrow because of this influence. For example, following antibiotic treatment, women may have a 30% chance of acquiring a yeast infection.

Researchers at the University of Illinois Urbana-Champaign are developing a remedy. Lolamicin is a novel antibiotic that has been found to selectively target gram-negative infections while sparing other bacteria.

Although there is still a long way to go until the medication is tested on humans, scientists are optimistic that it can act as a model for the creation of new antibiotics.

Gram-negative bacteria are notoriously hard to eradicate and are frequent causes of infections in the bladder, blood, lungs, and intestines. Currently, one of the most serious risks to global human health is their resistance to antibiotics.

Gram-positive and gram-negative bacteria can both be eliminated by broad-spectrum antibiotics. However, because gram-negative bacteria are more likely to be resistant to our current antibiotics, scientists believe it is imperative to develop medications that can target them specifically. This increases the likelihood that more bacteria beneficial to human health will survive.

A medication such as Lolamicin might be the solution. Lolamicin defeated 130 drug-resistant strains of common gram-negative bacteria, including K. pneumoniae, E. coli, and E. cloacae, in laboratory dishes. This medication was able to eradicate every single strain of drug-resistant bacteria, where many other antibiotics could not.

Lolamicin also effectively treated blood infections and acute pneumonia in living rodents, all the while protecting the gut microbiome.

The medication had “no effect on non-pathogenic gram-negative commensal bacteria or on gram-positive bacteria” that were living in the mice.

That’s an exciting finding, considering that the diversity of microbial species inhabiting the human gut can rapidly decline after even a brief course of antibiotics, and the decline can last for months before the microbiome returns to normal.

Although the effects of those changes on health are poorly understood, administering some antibiotics can make a patient more susceptible to secondary infections.

But Lolamicin isn’t like that. This novel drug did “not cause any substantial changes” to the gut microbiome of mice in the month following therapy, in contrast to the gram-positive-only antibiotic clindamycin and the broad-spectrum antibiotic amoxicillin.

During this period, mice treated with Lolamicin were exposed to Clostridium difficile, a bacterium that frequently causes infections in the colon after the use of antibiotics.

Those mice treated with Lolamicin did not develop C. difficile infections at nearly the same rate as those treated with clindamycin or amoxicillin.

The creation of an antibiotic that spares the microbiome could save lives, since the United States alone accounts for some 500,000 cases of C. difficile infections annually, of which 30,000 are fatal.

To make sure that bacteria do not eventually develop resistance to Lolamicin, scientists are currently refining their research.

“The intestinal microbiome is central to maintaining host health, and its perturbation can cause many deleterious effects, including C. difficile infection and beyond,” the authors conclude.

“Pathogen-specific antibiotics such as Lolamicin will be critical to minimizing collateral damage to the gut microbiome; this microbiome-sparing effect would make such antibiotics superior for patients compared with antibiotics in current clinical practice.”
